Abstract

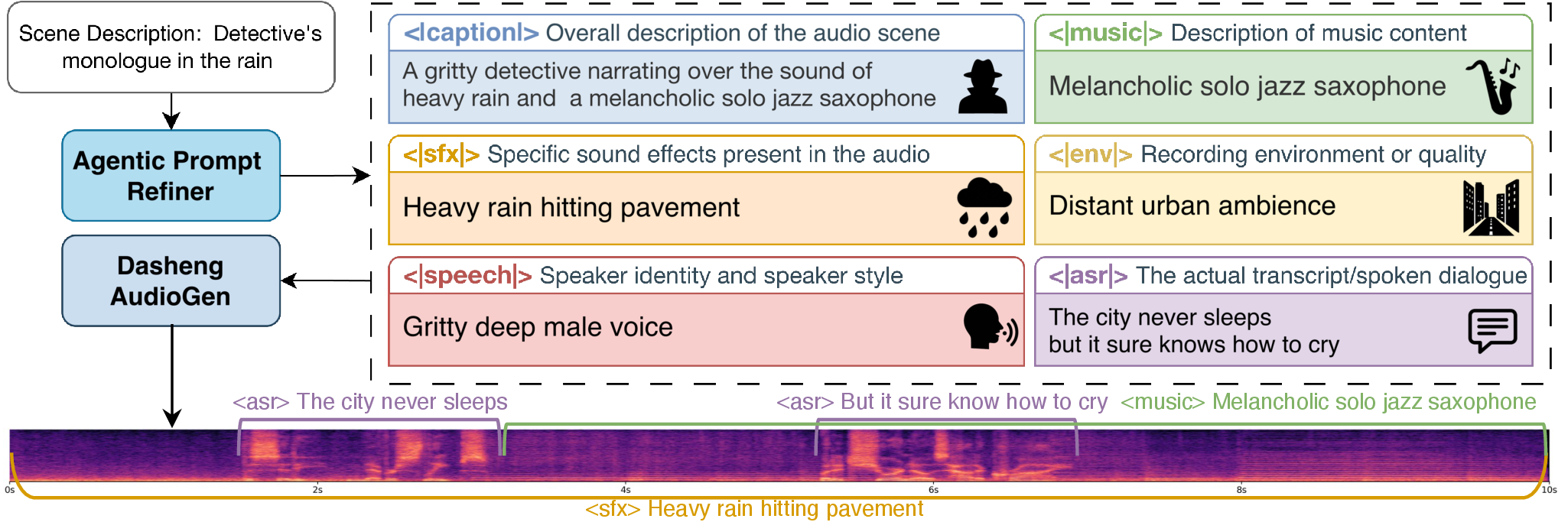

Audio generation has long been fragmented: speech, music, and sound effects are produced by domain-specific models that cannot jointly generate a coherent audio scene from a single description. We present Dasheng AudioGen, a unified framework for generating general mixed-audio scenes from text. Dasheng AudioGen introduces structured multi-view captions, which explicitly decouple a complex acoustic scene into separate descriptions of speech content, speaker style, sound effects, and music, thereby enabling fine-grained control over audio generation. Furthermore, we employ a high-dimensional unified semantic-acoustic representation (DashengTokenizer) as the shared latent space for flow matching. This representation injects semantic priors that facilitate cross-modal training convergence, while its high-dimensional feature space provides sufficient capacity to disentangle and fuse concurrent audio components effectively. Extensive subjective and objective experiments demonstrate that Dasheng AudioGen achieves performance approaching real-world recordings in mixed-audio categories, while remaining competitive with specialized expert models in single-type generation tasks. Demos and model checkpoints are available on the project page.